Broker Scorecards in 12 Minutes: An Automation Walkthrough

The quarterly broker review used to take a full day of data wrangling. Here's what the pipeline looks like when you stop doing it by hand.

By Zach Newton · Feb 22, 2026

At Mezzetta, broker scorecards were a quarterly event. Three brokers across different regions, each with their own reporting format, their own definition of "on-time," and their own way of tracking distribution gains.

Building the scorecards meant pulling sell-through from UNFI and KeHE, cross-referencing against the promo calendar, calculating fill rates from our internal system, and assembling it all into a format that actually told you something about performance.

It took about a day. Sometimes more, if the data was messy — and the data was always messy.

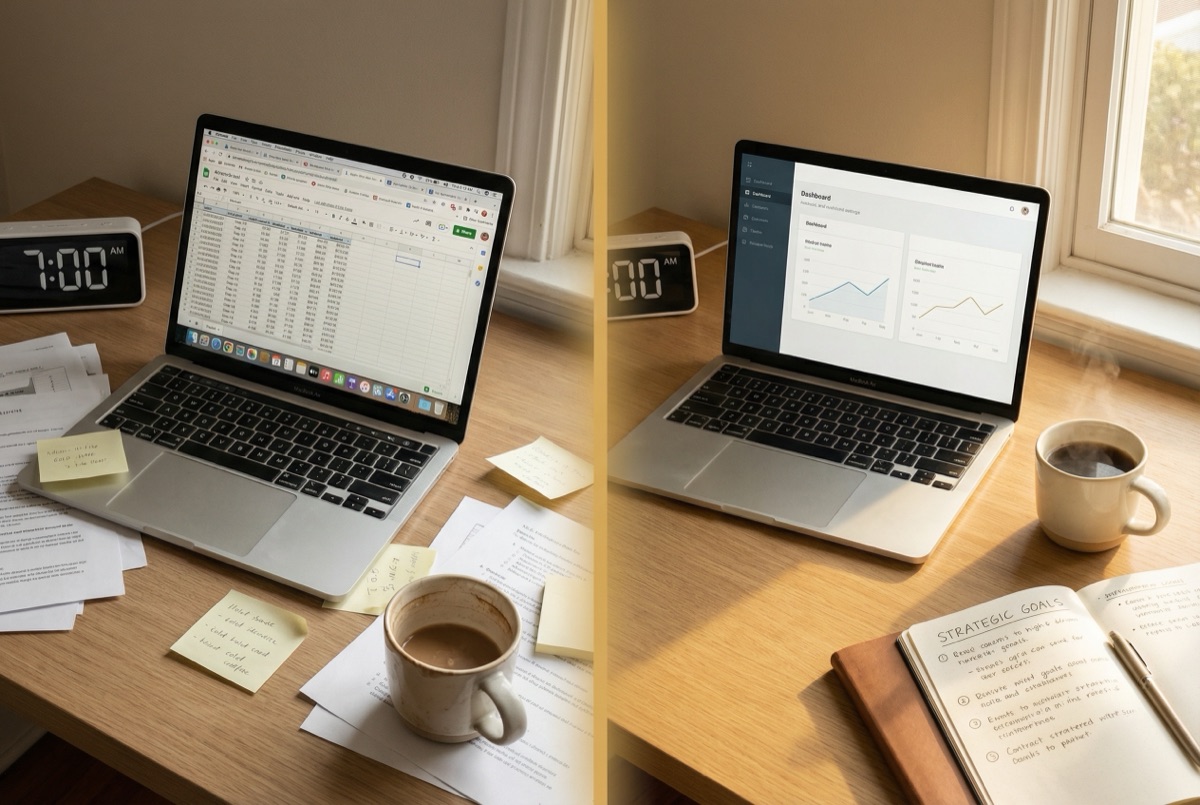

What the manual process looks like

Here's the workflow, for anyone who's lived it:

- Pull distributor data. Log into the KeHE portal. Export the sell-through report. Do the same for UNFI. If you're on DSD, pull from the DSD distributor's portal too.

- Normalize. Each distributor uses different SKU identifiers, different time periods, different category hierarchies. You spend 30-45 minutes just getting the data into a consistent format.

- Pull promo data. Open the trade promo tracker — usually a Google Sheet or Excel file that someone on the sales team maintains. Match promo periods to sell-through windows.

- Calculate metrics. Distribution gains/losses. ACV changes. Promo lift by retailer. Fill rate by SKU. Year-over-year velocity.

- Assemble the scorecard. Copy everything into the template. Add commentary. Flag the conversations you need to have. "Why did ACV drop at Kroger Southeast?" "The BOGO at Sprouts delivered 0.8x lift — below threshold."

- Send it. Schedule the review call. Prepare talking points.
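Most of the numbers in the "calculate metrics" step are simple ratios, which is part of why they automate well. Below is a minimal sketch of three of them. It assumes lift means promo-window velocity divided by a non-promo baseline (one common definition, not necessarily the one your brokers use). The 1.0x cutoff is a placeholder, because the article calls a 0.8x BOGO "below threshold" without giving the threshold. All function names are hypothetical.

```python
from typing import Optional

# Assumed cutoff: the article flags 0.8x as "below threshold" without stating
# the threshold, so 1.0x here is a placeholder, not the author's number.
LIFT_THRESHOLD = 1.0


def fill_rate(units_shipped: float, units_ordered: float) -> float:
    """Share of ordered units that actually shipped, from 0.0 to 1.0."""
    if units_ordered <= 0:
        raise ValueError("units_ordered must be positive")
    return units_shipped / units_ordered


def promo_lift(promo_velocity: float, baseline_velocity: float) -> float:
    """Velocity during the promo window divided by the non-promo baseline."""
    if baseline_velocity <= 0:
        raise ValueError("baseline_velocity must be positive")
    return promo_velocity / baseline_velocity


def acv_change(acv_now: float, acv_prior: float) -> float:
    """Change in %ACV between two periods, in percentage points."""
    return acv_now - acv_prior


def promo_flag(retailer: str, promo: str, lift: float,
               threshold: float = LIFT_THRESHOLD) -> Optional[str]:
    """Return a review note when a promo's lift falls under the threshold."""
    if lift < threshold:
        return f"The {promo} at {retailer} delivered {lift:.1f}x lift, below threshold."
    return None


# Made-up velocities, for illustration only.
lift = promo_lift(promo_velocity=8.0, baseline_velocity=10.0)
print(promo_flag("Sprouts", "BOGO", lift))
```

The flag text is generated from the numbers, so the "conversations you need to have" list builds itself once the inputs are clean.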

Elapsed time: 6-8 hours per broker scorecard. Four times a year. Across three brokers, that's 72-96 hours annually on scorecard assembly.

What the automated version looks like

The data sources don't change. UNFI still has their portal. KeHE still exports CSVs. Your promo calendar is still a spreadsheet. What changes is who does the wrangling.

The pipeline:

- A scheduled job pulls sell-through data from each distributor portal. For KeHE, that's their API. For UNFI, it's an automated export download. For DSD distributors with no API, it's a lightweight RPA script that logs in and downloads the report.

- A transformation step normalizes everything — maps distributor SKUs to your internal product codes, aligns time periods, handles the quarterly retailer hierarchy changes.

- The analysis runs against your promo calendar. Lift calculations, distribution tracking, fill rate trending.

- A scorecard template populates automatically. Commentary gets drafted by an LLM that's been trained on your previous scorecards and knows what you care about — it flags the anomalies, not just the numbers.
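The normalization step is where most of the manual 30-45 minutes went, and its core is a lookup plus a calendar alignment. Here is a minimal sketch, assuming each distributor row carries `distributor`, `sku`, `date` and `units` fields and that weeks start on Monday. The crosswalk entries and product codes are hypothetical; in practice the mapping would live in a maintained table.

```python
from collections import defaultdict
from datetime import date, timedelta
from typing import Dict, List, Tuple

# Hypothetical crosswalk: (distributor, distributor SKU) -> internal product code.
SKU_MAP: Dict[Tuple[str, str], str] = {
    ("KEHE", "K-102938"): "INT-1001",
    ("UNFI", "4471203"): "INT-1001",
}


def week_start(d: date) -> date:
    """Snap a date to the Monday of its week so every feed shares one calendar."""
    return d - timedelta(days=d.weekday())


def normalize(rows: List[dict]):
    """Map SKUs and sum units per (distributor, internal code, week start).

    Rows without a crosswalk entry are returned separately for review
    instead of being silently dropped.
    """
    totals: Dict[Tuple[str, str, date], float] = defaultdict(float)
    unmapped: List[dict] = []
    for row in rows:
        code = SKU_MAP.get((row["distributor"], row["sku"]))
        if code is None:
            unmapped.append(row)
            continue
        key = (row["distributor"], code, week_start(row["date"]))
        totals[key] += row["units"]
    return dict(totals), unmapped


rows = [
    {"distributor": "KEHE", "sku": "K-102938", "date": date(2026, 2, 18), "units": 40},
    {"distributor": "KEHE", "sku": "K-102938", "date": date(2026, 2, 20), "units": 10},
    {"distributor": "UNFI", "sku": "999", "date": date(2026, 2, 18), "units": 5},
]
totals, unmapped = normalize(rows)
```

Returning unmapped rows rather than discarding them matters: a new distributor SKU should show up as a review item, not as a silent drop in sell-through.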

Elapsed time: 12 minutes of pipeline execution. 20 minutes of human review and commentary editing. Done before your first meeting of the day.

Where the ROI lives

The time savings are obvious: 72+ hours per year reclaimed. At a $55/hour loaded rate, that's roughly $4,000 in direct labor savings. Nice, but not transformative.
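The savings figure follows directly from the manual-process numbers: 6 to 8 hours per scorecard, three brokers, four reviews a year, $55/hour. A quick check, with those values as defaults (the function names are just for illustration):

```python
def annual_hours(hours_per_scorecard: float, brokers: int = 3,
                 reviews_per_year: int = 4) -> float:
    """Hours spent assembling scorecards across all brokers in a year."""
    return hours_per_scorecard * brokers * reviews_per_year


def annual_cost(hours: float, loaded_rate: float = 55.0) -> float:
    """Dollar value of those hours at a loaded hourly rate."""
    return hours * loaded_rate


for per_scorecard in (6, 8):
    hours = annual_hours(per_scorecard)
    print(f"{per_scorecard} h per scorecard -> {hours} h/year -> ${annual_cost(hours):,.0f}")
```

The 6-hour case gives 72 hours and $3,960, which is where "roughly $4,000" comes from; the 8-hour case gives 96 hours and $5,280. Both are gross figures that don't subtract the 20 minutes of human review each automated run still needs.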

The real value is in what happens with the data between quarterly reviews.

When scorecard assembly is automated, there's no reason it has to be quarterly. Run it monthly. Run it weekly. Suddenly you're catching the ACV drop at Kroger Southeast three weeks after it happens instead of three months. You're flagging the underperforming promo while there's still time to adjust the next one.
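Catching an ACV drop within weeks comes down to comparing each period with the one before it on every run. A minimal sketch, assuming weekly %ACV by retailer/region is already available from the normalized data. The 5-point alert threshold is a placeholder to tune, not a figure from this article, and the sample series are made up.

```python
from typing import Dict, List, Tuple

# Assumed alert rule: flag any week-over-week drop of 5 or more ACV points.
ACV_DROP_POINTS = 5.0


def flag_acv_drops(series: Dict[str, List[Tuple[str, float]]],
                   threshold: float = ACV_DROP_POINTS) -> List[str]:
    """series maps a retailer/region label to (week, %ACV) pairs in date order."""
    alerts = []
    for label, points in series.items():
        # Pair each week with the week before it.
        for (_, prev_acv), (week, acv) in zip(points, points[1:]):
            drop = prev_acv - acv
            if drop >= threshold:
                alerts.append(
                    f"{label}: ACV down {drop:.1f} pts in week of {week} "
                    f"({prev_acv:.1f}% -> {acv:.1f}%)"
                )
    return alerts


# Made-up numbers, for illustration only.
weekly = {
    "Kroger Southeast": [("2026-01-05", 62.0), ("2026-01-12", 61.5), ("2026-01-19", 54.0)],
    "Sprouts": [("2026-01-05", 40.0), ("2026-01-12", 41.0), ("2026-01-19", 40.5)],
}
for alert in flag_acv_drops(weekly):
    print(alert)
```

On a quarterly cadence the same 7.5-point drop would surface only at the next review; run weekly, it shows up the week it happens.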

The information was always there. You just couldn't get to it fast enough when every pull was manual.

What it takes to build this

The technical components are straightforward:

- Data connectors: API integrations for platforms that have them, scheduled browser automation for those that don't. This isn't cutting-edge — it's plumbing.

- Data transformation: A cleaning and normalization layer. Python scripts, or a SQL-based transformation tool like dbt if you want to get fancy.

- Analysis engine: SQL queries or Python calculations against normalized data. Nothing that requires a data science PhD.

- Output layer: Template population and LLM-assisted commentary. Google Slides API, or a custom report generator.
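The template-population half of the output layer can start very small. A minimal sketch using Python's standard `string.Template`, assuming a plain-text layout in place of Google Slides or a custom report generator. The field names are hypothetical, and the LLM commentary step is left out because no specific provider or API is named here; the flag list is the natural input to it.

```python
from string import Template
from typing import List

# Hypothetical plain-text layout standing in for a slide or report template.
SCORECARD = Template(
    "Broker: $broker | Period: $period\n"
    "Fill rate: $fill_rate\n"
    "Average promo lift: $lift\n"
    "Flags for review:\n"
    "$flags\n"
)


def render_scorecard(broker: str, period: str, fill_rate: float,
                     lift: float, flags: List[str]) -> str:
    """Fill the template; the flags are what drafted commentary would expand on."""
    flag_lines = "\n".join(f"- {flag}" for flag in flags) or "- None"
    return SCORECARD.substitute(
        broker=broker,
        period=period,
        fill_rate=f"{fill_rate:.1%}",
        lift=f"{lift:.2f}x",
        flags=flag_lines,
    )


print(render_scorecard("Broker A", "Q4 2025", 0.95, 1.35,
                       ["ACV drop at Kroger Southeast"]))
```

`substitute` raises `KeyError` if a placeholder is left unfilled, which is a useful guard when a metric silently fails to compute.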

The hard part isn't any single component. It's knowing which distributor portals have quirks (KeHE's export drops organic category codes every Q3), which metrics your brokers actually respond to (hint: it's not the ones in the standard template), and how to scope the project so it ships in weeks instead of quarters.
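Portal quirks like the dropped organic codes are easiest to live with when they're written down as pre-flight checks that run before any numbers move. A minimal sketch, assuming the export is a CSV with a category column. The column name and code values are hypothetical, since the actual export layout isn't described here.

```python
import csv
import io
from typing import Iterable, Set

# Hypothetical column name and category codes; use whatever the real file contains.
EXPECTED_CATEGORIES = {"CONVENTIONAL", "ORGANIC"}


def missing_categories(csv_text: str, column: str = "category_code",
                       expected: Iterable[str] = EXPECTED_CATEGORIES) -> Set[str]:
    """Return expected category codes that never appear in the export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    seen = {(row.get(column) or "").strip() for row in reader}
    return set(expected) - seen


export = "sku,category_code,units\nK-102938,CONVENTIONAL,40\nK-555001,CONVENTIONAL,12\n"
gaps = missing_categories(export)
if gaps:
    # A real pipeline would halt the run or alert someone here; this just prints.
    print(f"Export is missing categories: {sorted(gaps)}")
```

The check encodes the operator knowledge once, so the Q3 gap becomes an alert instead of a quiet hole in the organic numbers.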

That's operator knowledge, not engineering knowledge. And that's why this kind of work sits at the intersection of CPG experience and technical capability.

The broker scorecard pipeline is one of the most common custom builds we do at NWTN AI. If your team is spending a full day on quarterly scorecards, let's scope what automation looks like for your broker network.

10 years in CPG operations — from KRAVE Jerky to Mezzetta. Now helps CPG brands navigate the AI landscape — evaluating, bringing in, and integrating the right tools.

See what 10 hours of manual work looks like automated.

Book a Free Audit Call →